AI – its risks and possible side effects

The Ethics Council advises the German government – and warns against indiscriminate enthusiasm about the use of artificial intelligence.

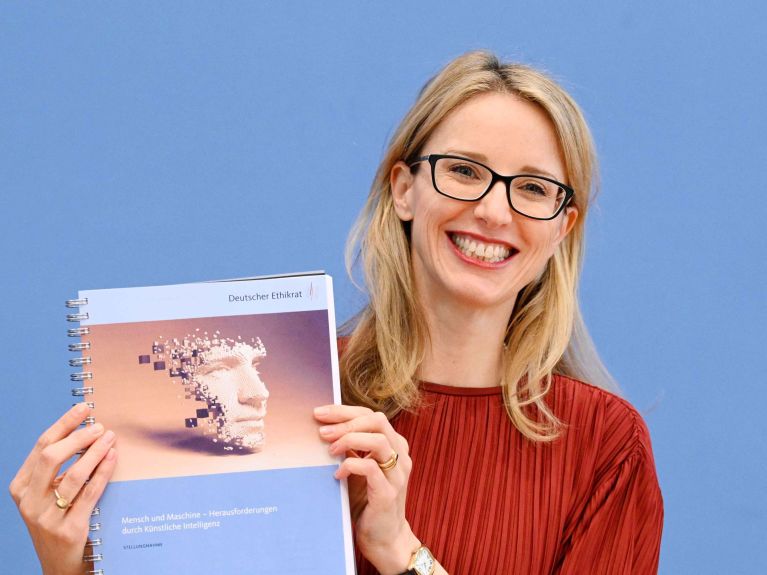

Artificial intelligence is ubiquitous. “It can be used to diagnose cancer or practise English vocab with pupils at school, but also to determine who should receive certain social welfare benefits or to influence our behaviour on social media,” explains Professor Alena Buyx, chairperson of the German Ethics Council. Simplifying processes, making life easier for people, identifying diseases – that all sounds like a brave new world. However, the Ethics Council, commissioned by the Bundestag in 2020 to study the opportunities and risks of AI, does not foresee an entirely rosy future. “AI must not be allowed to replace human beings,” says Buyx, who chairs the council, a body of scientists from different disciplines that advises policymakers and the government on ethical questions. She sums up the council’s key findings as follows: “The use of AI must widen rather than limit human scope for development, authorship and action.”

AI must not be allowed to replace human beings.

In a 287-page publication entitled “Humans and Machines”, the Ethics Council has documented the risks and opportunities that it associates with the new technologies. The report (in German) was presented in Berlin in mid-March. One of the Ethics Council’s important tasks is to critically review new developments and to warn of dangers, and the expert report does exactly that. Judith Simon, a professor of ethics in information technology at Universität Hamburg, says that entrusting tasks to AI should essentially be aimed at broadening human abilities. The challenge therefore is “to prevent any diminishing of human action capability and authorship and any diffusion of responsibility.”

Responsibility cannot be delegated to machines.

Professor Julian Nida-Rümelin, former minister of state for culture in Germany, also stresses the importance of human responsibility, which cannot “be delegated to machines or shared with machines”. The Ethics Council sees considerable dangers looming if AI is not used transparently, especially in the media and in communication, as well as in search engines, news services and social media. Personalised offers in particular can convey the impression of an objective choice, but in fact reflect the user’s previous behavioural patterns as analysed by the AI. This is because private firms have a commercial interest in binding users to them. “Consequently, the range of information and opinion on offer becomes more narrow,” warns Nida-Rümelin. In the Ethics Council’s view, one way to counter this could be to establish a digital communication structure regulated by public law as an alternative to the platforms of private digital corporations. As Nida-Rümelin explains, this should not be confused with public broadcasters in Germany: such platforms could, for example, be operated by public law foundations without any significant influence from the state.
